On Monday, the U.K.’s internet regulator, Ofcom, published the first set of final guidelines for online service providers subject to the Online Safety Act. Their publication starts the clock on the first compliance deadline under the sprawling online harms law, which Ofcom expects to fall in three months’ time.

Ofcom has faced pressure to move faster in implementing the online safety framework following last summer’s social media-fueled riots. However, the regulator is following the process lawmakers set out, which requires it to consult on the final compliance measures and have parliament approve them.

“This decision on the Illegal Harms Codes and guidance represents a significant milestone, as online providers are now legally obligated to safeguard their users from illegal harm,” stated Ofcom in a press release. Providers must now assess the risk of illegal harms on their services, and have until March 16, 2025 to do so. Subject to the Codes completing the parliamentary process, from March 17, 2025 providers must implement the safety measures set out in the Codes, or use other effective measures, to protect users from illegal content and activity.

Ofcom said it is ready to take enforcement action against providers that do not act promptly to address the risks on their services. More than 100,000 tech companies could fall within the law’s scope, which requires them to protect users from a range of illegal content tied to the Act’s specified “priority offences,” including terrorism, hate speech, child sexual abuse, fraud, and financial crimes. Non-compliance could bring fines of up to 10% of global annual turnover or £18 million, whichever is higher.

In-scope firms range from tech giants to very small service providers, across sectors including social media, dating, gaming, search, and adult content. The Act’s duties apply to providers of services with links to the UK, regardless of where in the world they are based. The codes and guidance follow a consultation in which Ofcom drew on research and stakeholder feedback, carried out since the Act passed parliament and became law in October 2023.

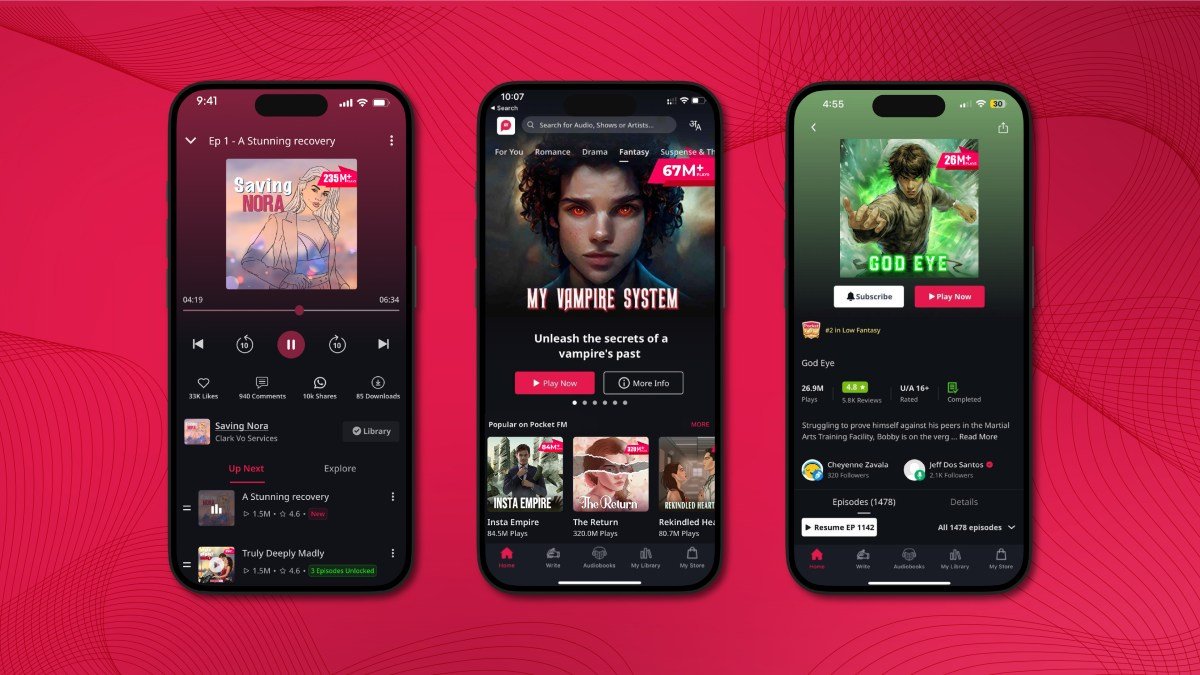

The measures Ofcom has set out cover user-to-user and search services, and include guidance on risk assessments, record-keeping, and reviews. The UK law takes a tailored approach, placing more stringent obligations on larger services and on those with multiple risks than on smaller services with fewer risks. Smaller services are not exempt, however: several requirements apply to all services, such as having a content moderation system that allows illegal content to be taken down swiftly, giving users a way to submit content complaints, and having clear and accessible terms of service.

Every tech firm providing user-to-user or search services in the UK must assess how the law applies to its business, and may need to make operational changes to address specific areas of regulatory risk. Larger platforms that rely on user engagement for monetization may need more substantial changes to meet their duties to protect users from the harms the law covers. Notably, the law introduces criminal liability for senior executives in certain circumstances, meaning tech CEOs could be held personally liable for some types of non-compliance.

Ofcom’s CEO, Melanie Dawes, told BBC Radio 4’s Today program that 2025 should bring significant changes in how major tech platforms operate. She said tech companies will need to change how their algorithms work so that illegal content such as terrorist material, hate speech, and intimate image abuse does not spread to users.

“And if things slip through the net, they have to take it down. And for children, we want their accounts to be set to be private, so they can’t be contacted by strangers,” she added.

Ofcom’s policy statement is only the first step in implementing the law’s requirements. The regulator is still working on further measures and duties relating to other parts of the Act, including what Dawes called “wider protections for children” that she said will be introduced in the new year.

More substantive child safety-related changes to platforms that parents have been calling for may not come until later in the year.

“In January, we’re going to come forward with our requirements on age checks so that we know where children are,” said Dawes. “And then in April, we’ll finalize the rules on our wider protections for children — addressing pornography, suicide and self-harm material, violent content, and ensuring that harmful content is not accessible to kids in the way that has become so normalized today.”

Ofcom’s summary document also mentions that further measures may be needed to keep pace with technological advancements such as generative AI, indicating that requirements for service providers may continue to evolve.

The regulator is also planning “crisis response protocols for emergency events” such as last summer’s riots, proposals for blocking the accounts of people who have shared child sexual abuse material, and guidance on using AI to tackle illegal harms.