Healthcare giant Optum recently limited access to an internal AI chatbot used by employees after a security researcher discovered it was publicly accessible online. The chatbot, known as “SOP Chatbot,” allowed employees to ask questions about patient health insurance claims and disputes in accordance with the company’s standard operating procedures (SOPs).

Exposure to the internet

The chatbot’s inadvertent exposure raised concerns, especially as Optum’s parent company, UnitedHealth, faces scrutiny for using artificial intelligence tools to influence medical decisions and deny patient claims. Mossab Hussein, chief security officer at spiderSilk, drew attention to the exposure: although the chatbot was hosted on an internal Optum domain, it could be reached through its IP address without a password.

Demo tool

Optum clarified that the chatbot was a demo tool, developed to test how the tool responds to questions based on a small sample set of SOP documents. It was trained on internal Optum documents to answer questions about claim-handling procedures and did not contain protected health information. Although employees had used the tool hundreds of times since September, it was never put into production, and it has since been made inaccessible from the internet.

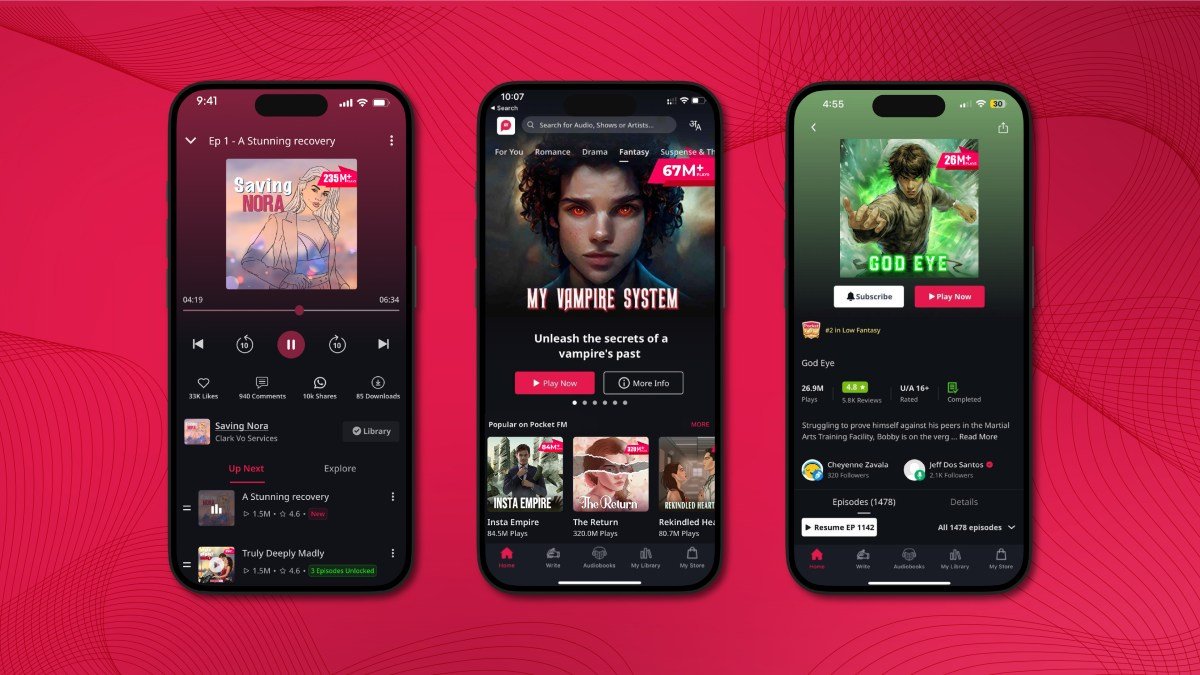

AI chatbot capabilities

Like many AI models, Optum’s chatbot could produce answers beyond its training data, prompting employees to test its capabilities with queries unrelated to insurance claims. While the chatbot could generate responses based on its training, Optum confirmed that it was designed to improve access to SOPs, not to make any decisions.

Legal challenges

UnitedHealth Group, the conglomerate that owns Optum and UnitedHealthcare, has faced criticism and legal action for allegedly using artificial intelligence to deny patient claims. Reports of denied healthcare coverage have led to legal challenges, including accusations that the company used AI models with high error rates to wrongfully deny care to elderly patients.

Financial impact

Despite these challenges, UnitedHealth Group reported significant profits in 2023. That contrast underscores the importance of addressing concerns about the use of artificial intelligence in healthcare decision-making.